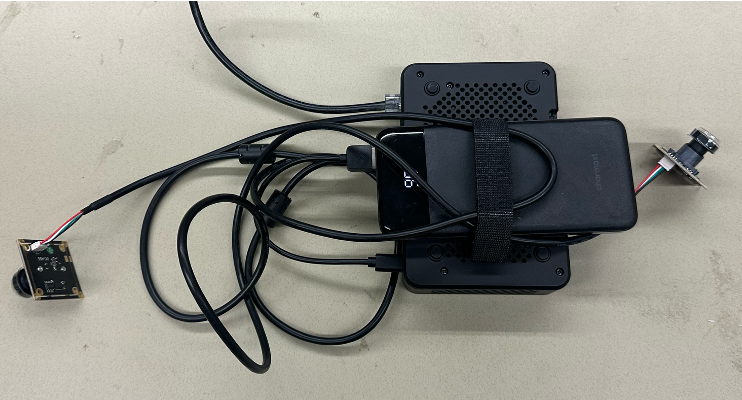

University of Iowa BME Senior Design project. BikeBuddy combines Raspberry Pi hardware, real-time computer vision, and LLM-powered analysis to improve biking safety for children by identifying nearby hazards and providing timely auditory and haptic alerts.

Overview

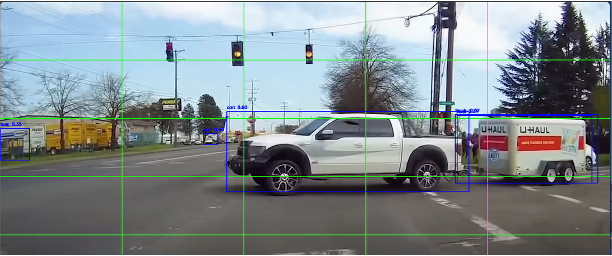

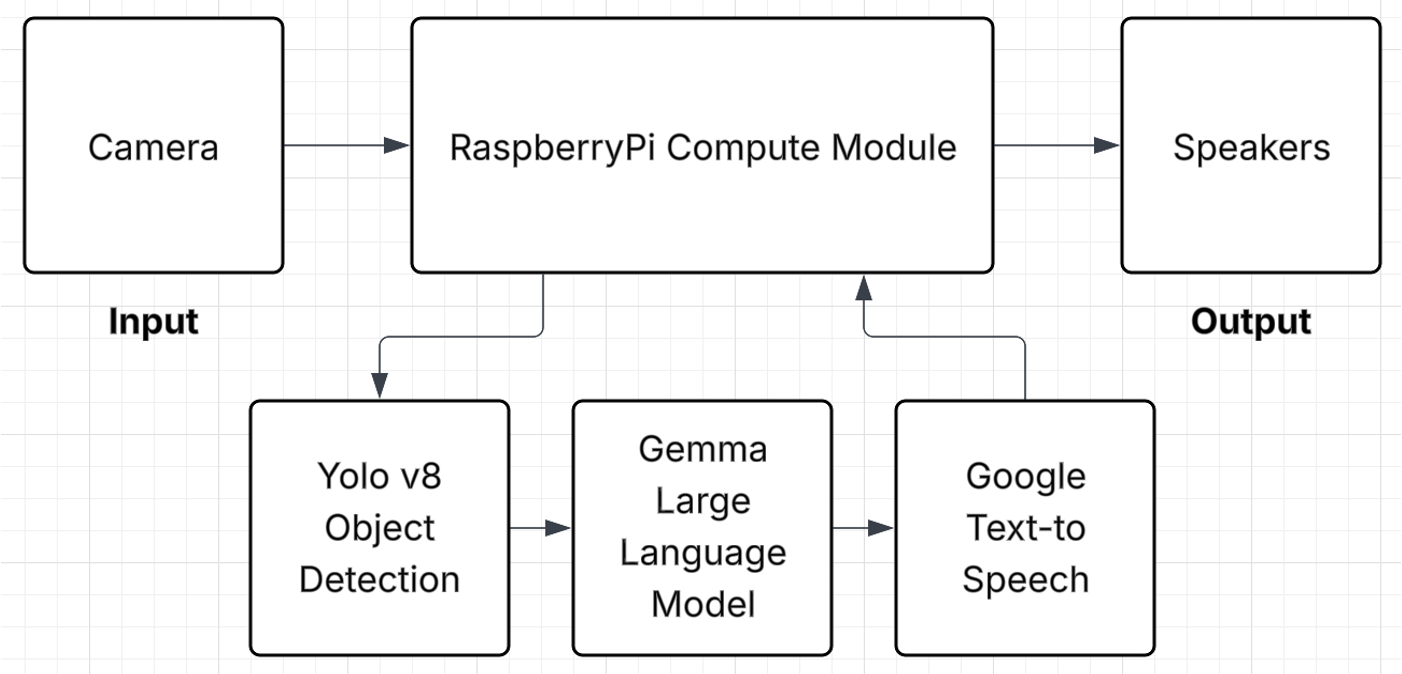

The system uses onboard cameras and object-detection models to recognize vehicles, obstacles, and other hazards in the rider's environment. When a hazard is detected, the system delivers real-time alerts through audio feedback so the child can react without looking away from the road. An LLM layer adds explainability, helping riders understand what was detected and why an alert was triggered. The prototype integrates embedded hardware, a computer vision pipeline, and natural-language output in a single device designed to be both reliable and easy for a child to use.
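The detect-then-alert step described above can be sketched roughly as follows. This is an illustrative sketch only, not code from the BikeBuddy repository: the `Detection` type, the `HAZARD_LABELS` set, the thresholds, and the use of bounding-box size as a stand-in for proximity are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Detection:
    """One object-detector output for a single frame (hypothetical shape)."""
    label: str         # class name reported by the detector
    confidence: float  # detector score in [0, 1]
    box_area: float    # fraction of the frame covered by the box, in [0, 1]


# Assumed set of classes treated as hazards; the real project may differ.
HAZARD_LABELS = {"car", "truck", "bus", "motorcycle"}


def pick_alert(
    detections: Iterable[Detection],
    min_confidence: float = 0.5,
    min_area: float = 0.05,
) -> Optional[Detection]:
    """Return the detection to alert on, or None if nothing qualifies.

    A detection qualifies if it is a hazard class, is confident enough,
    and is large enough in the frame. Among qualifying detections the
    largest box wins, on the assumption that a larger box means a
    closer object.
    """
    hazards = [
        d for d in detections
        if d.label in HAZARD_LABELS
        and d.confidence >= min_confidence
        and d.box_area >= min_area
    ]
    if not hazards:
        return None
    return max(hazards, key=lambda d: d.box_area)
```

In a setup like this, the result of `pick_alert` would be handed to whatever plays the audio cue, and a return value of `None` means the rider hears nothing for that frame.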

Evaluating project success: Eye Gaze Detector

To measure whether the system actually improved rider attention and hazard awareness, we built an Eye Gaze Detector for video analysis. The tool uses OpenFace to compute eye gaze vectors, segments the video into quadrants, and determines which quadrant the subject is looking at. By running it on footage of riders using BikeBuddy and on footage recorded under baseline conditions, we could quantify whether alerts led to more time looking at the road and faster reactions to hazards. This gave us a concrete, data-driven way to evaluate project success beyond subjective feedback.
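As a rough illustration of the quadrant step, the sketch below maps a pair of gaze angles to one of four quadrants and then reports the share of frames spent in each. It is not the code from the EyeGaze_Detector repository. The function names are hypothetical, and the sign convention (positive horizontal angle means right, positive vertical angle means down) is an assumption that should be checked against the gaze output actually in use.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def gaze_quadrant(angle_x: float, angle_y: float) -> str:
    """Map a gaze direction in radians to a quadrant name.

    Assumed convention: angle_x >= 0 means looking right,
    angle_y >= 0 means looking down. Zero falls on the
    right / lower side so every sample gets exactly one label.
    """
    horizontal = "right" if angle_x >= 0 else "left"
    vertical = "lower" if angle_y >= 0 else "upper"
    return f"{vertical}-{horizontal}"


def quadrant_shares(samples: Iterable[Tuple[float, float]]) -> Dict[str, float]:
    """Return the fraction of samples that fall in each quadrant.

    `samples` is one (angle_x, angle_y) pair per video frame.
    Quadrants with no samples are omitted; an empty input gives {}.
    """
    counts = Counter(gaze_quadrant(x, y) for x, y in samples)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {quadrant: n / total for quadrant, n in counts.items()}
```

Comparing the output of `quadrant_shares` for a BikeBuddy ride against a baseline ride gives a per-quadrant difference in looking time, which is the kind of comparison described above.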

GitHub: NikHerdt/EyeGaze_Detector

Source

GitHub: adervesh03/BikeBuddy (Senior Design Team 9: Ashwin Dervesh, Nikhil Herdt, Nirvik Mitra)